case study · 06

Capture a thought. Ask in natural language. It surfaces what you meant.

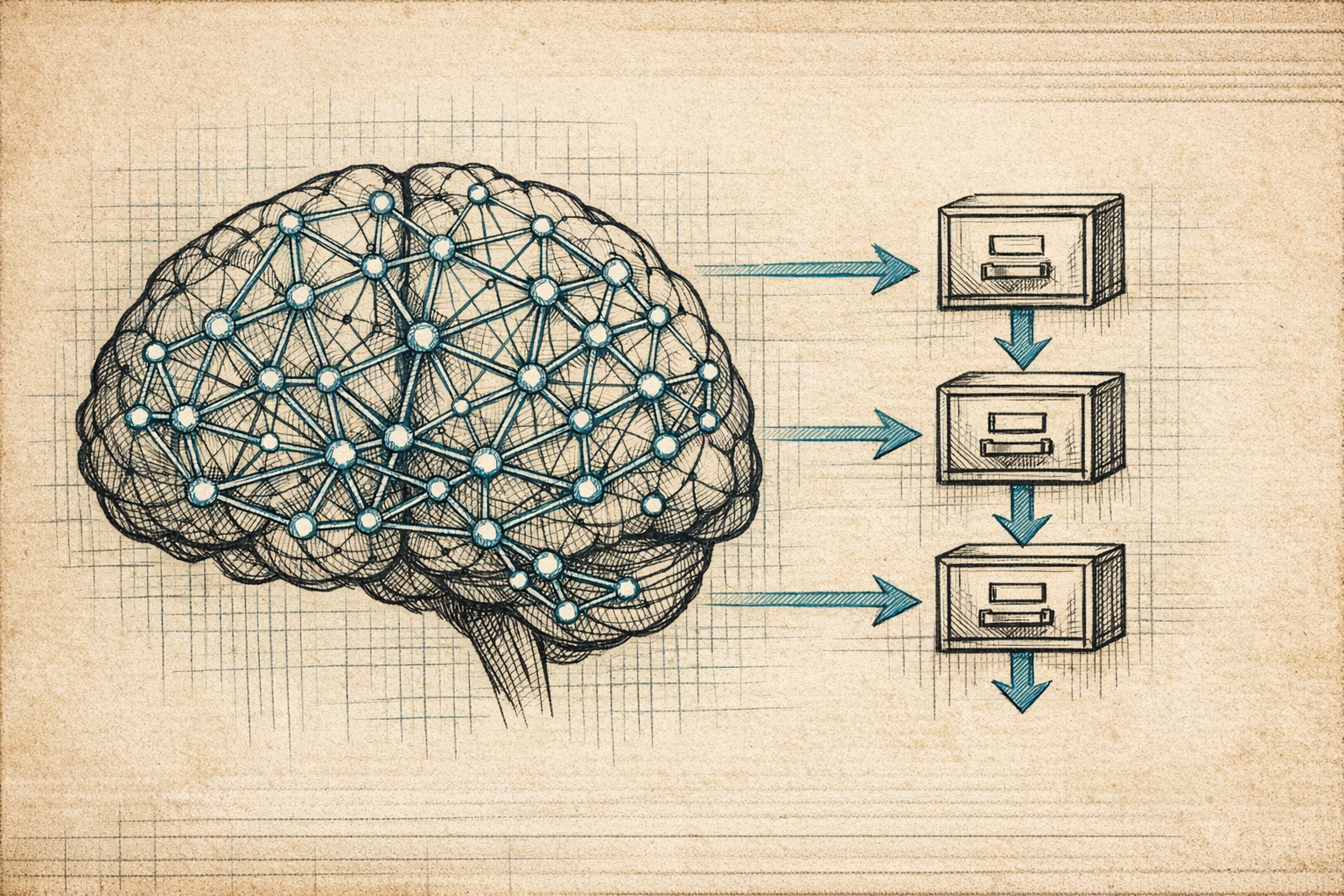

Semantic memory layer for AI agents. Postgres + pgvector + an MCP server. Drop a sentence, it gets embedded, classified, and filed. The next agent that needs the context gets it without being told to look.

~ what shipped ~

metric · 01

MCP-native

Exposes capture, query, and recall as MCP tools. Any MCP-compatible agent (Claude Code, Codex CLI, custom harnesses) can write to and read from the same memory without adapter glue.

metric · 02

Self-classifying

Captures get auto-tagged on insert. No upfront schema, no taxonomy maintenance. The classification model evolves with the corpus instead of fighting it.

metric · 03

Hybrid retrieval

Vector similarity (pgvector) for semantic recall, full-text BM25 for exact-term match. A reranker decides which signal wins per query. Both are wrong sometimes; the combination is right more often.
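One way to picture how two ranked lists get combined is reciprocal rank fusion (RRF). This is a stand-in, not the reranker described above: the source does not say how its reranker works, and RRF is named here only because it is a common, simple way to merge a vector ranking with a lexical one. The capture ids and the constant `k=60` are assumptions for illustration.

```python
from collections import defaultdict


def reciprocal_rank_fusion(rankings, k=60):
    """Fuse several ranked lists of ids into a single list, best first.

    Each id earns 1 / (k + rank) from every list it appears in, so an id
    that ranks well in both lists beats one that tops only a single list.
    """
    scores = defaultdict(float)
    for ranking in rankings:
        for rank, capture_id in enumerate(ranking, start=1):
            scores[capture_id] += 1.0 / (k + rank)
    # Highest fused score first; ties broken by id so the order is stable.
    return sorted(scores, key=lambda cid: (-scores[cid], cid))


# Hypothetical ids, as if returned by the two retrieval paths.
vector_hits = ["c3", "c1", "c7"]   # semantic side (pgvector in the real system)
lexical_hits = ["c1", "c9", "c3"]  # exact-term side (BM25 in the real system)

fused = reciprocal_rank_fusion([vector_hits, lexical_hits])
print(fused)
```

Here `c1` and `c3` rise to the top because both signals agree on them, which is the "combination is right more often" idea in miniature.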

Most AI products call their context window "memory." It isn't. It's a buffer that gets cleared between sessions and frequently mid-session. Real memory persists, gets queried, returns relevant pieces, and gets updated by the agent itself when new things matter.

Captures are plain-text sentences with optional metadata. On write, the system embeds them, classifies them into auto-discovered tags, and stores both the vector and the lexical form. On read, queries hit both the vector index and the BM25 index, the results get reranked, and the top N are returned.
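The write and read paths above can be sketched as a toy, in-memory flow. Everything here is an assumption made for illustration: the `Memory` and `Capture` names, the character-trigram "embedding" standing in for a real embedding model, the frequency-based `classify` placeholder, the term-overlap score standing in for BM25, and the fixed 50/50 blend standing in for the reranker. The real system stores this in Postgres with pgvector; the sketch only shows the shape of the data flow.

```python
import math
import re
from collections import Counter
from dataclasses import dataclass, field


def tokenize(text):
    """Lowercase word tokens: the lexical form kept for exact-term match."""
    return re.findall(r"[a-z0-9]+", text.lower())


def embed(text):
    """Toy stand-in for an embedding model: character-trigram counts."""
    s = " ".join(tokenize(text))
    return Counter(s[i:i + 3] for i in range(len(s) - 2))


def cosine(a, b):
    """Cosine similarity between two sparse count vectors."""
    dot = sum(count * b[gram] for gram, count in a.items())
    norm = math.sqrt(sum(v * v for v in a.values())) * math.sqrt(
        sum(v * v for v in b.values())
    )
    return dot / norm if norm else 0.0


def classify(tokens):
    """Placeholder tagger: up to two frequent tokens longer than four characters."""
    counts = Counter(t for t in tokens if len(t) > 4)
    return [tag for tag, _ in counts.most_common(2)]


@dataclass
class Capture:
    text: str
    metadata: dict
    vector: Counter
    tokens: list
    tags: list


@dataclass
class Memory:
    captures: list = field(default_factory=list)

    def capture(self, text, metadata=None):
        """Write path: embed, classify, and store both vector and tokens."""
        tokens = tokenize(text)
        item = Capture(text, metadata or {}, embed(text), tokens, classify(tokens))
        self.captures.append(item)
        return item

    def query(self, question, top_n=3):
        """Read path: score both signals, blend them, return the top N."""
        q_vec, q_tokens = embed(question), set(tokenize(question))
        scored = []
        for item in self.captures:
            semantic = cosine(q_vec, item.vector)
            lexical = len(q_tokens & set(item.tokens)) / len(q_tokens) if q_tokens else 0.0
            scored.append((0.5 * semantic + 0.5 * lexical, item))
        scored.sort(key=lambda pair: pair[0], reverse=True)
        return [item for _, item in scored[:top_n]]


memory = Memory()
memory.capture("Staging deploys run from the release branch", {"source": "standup"})
memory.capture("The cat prefers the window seat")
hits = memory.query("how do we deploy to staging", top_n=1)
print(hits[0].text)
```

Note that "deploy" in the question never appears as an exact token in the stored sentence ("deploys" does), so the lexical score only credits "staging"; the trigram vector picks up the rest. That gap between the two signals is why both are kept.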

Exposing this as an MCP server means any compatible agent (Claude Code, Codex CLI, custom harnesses) can read and write the same memory without bespoke adapters. The memory persists across sessions, across tools, across the whole stack. One memory, many agents.
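As a rough illustration of what "exposed as MCP tools" means, an MCP server advertises each tool with a `name`, a `description`, and a JSON Schema `inputSchema`. The three tool names below come from the text; every parameter name, every description, and the meaning given to `recall` (fetching captures by tag) are guesses made for this sketch, not the project's actual interface. The `missing_arguments` helper is likewise hypothetical.

```python
# Hypothetical tool descriptors in the shape an MCP server lists its tools.
# Parameter names and the semantics of "recall" are assumptions.
TOOLS = [
    {
        "name": "capture",
        "description": "Store a plain-text thought with optional metadata.",
        "inputSchema": {
            "type": "object",
            "properties": {
                "text": {"type": "string"},
                "metadata": {"type": "object"},
            },
            "required": ["text"],
        },
    },
    {
        "name": "query",
        "description": "Ask a natural-language question against the memory.",
        "inputSchema": {
            "type": "object",
            "properties": {
                "question": {"type": "string"},
                "top_n": {"type": "integer", "minimum": 1},
            },
            "required": ["question"],
        },
    },
    {
        "name": "recall",
        "description": "Fetch stored captures by tag (assumed semantics).",
        "inputSchema": {
            "type": "object",
            "properties": {"tag": {"type": "string"}},
            "required": ["tag"],
        },
    },
]


def missing_arguments(tool_name, arguments):
    """Return the required fields a call to tool_name left out."""
    tool = next(t for t in TOOLS if t["name"] == tool_name)
    return [f for f in tool["inputSchema"]["required"] if f not in arguments]


print(missing_arguments("capture", {"metadata": {"source": "standup"}}))
```

Because every agent sees the same three descriptors, none of them needs its own adapter; the schema is the contract.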

Not a knowledge base. Not a vault. Not a Notion replacement. The interface is "store this thought" and "what do I know about X." Everything else is the agent's responsibility.

↳ the agent that needs the context gets it without being told.

~ counterfactual ~

Without it: every agent session starts with amnesia. Context the human surfaced last week is invisible to the agent today, so the human surfaces it again. The "memory" layer most agent products ship is an in-context window plus prayer. Real memory is a database with hybrid retrieval; everything else is just very expensive forgetting.

~ got something like this on the bench? ~

/ open-brain / built by hand / shipped to a working URL /